The AI field is evolving rapidly, and startups in 2025 face a pivotal choice when selecting machine learning models for their applications. Transformers and diffusion models are two of the most prominent architectures in use today. Transformers have been the backbone of many cutting-edge AI models, while diffusion models are gaining attention for generative tasks like image and audio creation. In this article, we compare transformers and diffusion models: what startups are choosing, the benefits of each, and how these models are being applied in real-world products.

What Are Transformers?

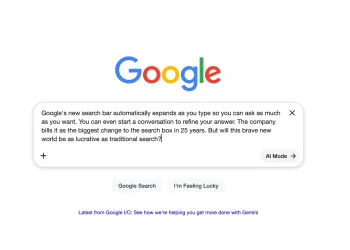

Transformers have become the go-to architecture for many state-of-the-art natural language processing (NLP) and generative AI models. Popularized by models like GPT-3 and BERT, transformers use a self-attention mechanism to capture relationships between words or data points, regardless of their position in a sequence. This makes them highly effective for tasks like text generation, machine translation, and question answering.
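
To make the self-attention idea concrete, here is a minimal sketch in plain Python. It is an illustration under simplifying assumptions, not how production models are written: real transformers apply learned query, key, and value projections and use several attention heads, while this version uses the token vectors directly. The function names and the toy `tokens` input are our own.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(x):
    """Scaled dot-product self-attention with identity projections (a simplification).

    x is a list of n token vectors, each a list of d floats.
    Returns (outputs, weights); weights[i][j] is how strongly token i attends to token j.
    """
    d = len(x[0])
    weights = []
    for q in x:
        # Compare this token against every token in the sequence, whatever its position.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in x]
        weights.append(softmax(scores))
    # Each output is a weighted average of all token vectors.
    outputs = [
        [sum(w * v[c] for w, v in zip(row, x)) for c in range(d)]
        for row in weights
    ]
    return outputs, weights

# Toy input: tokens 0 and 2 are identical, token 1 is different.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
outputs, weights = self_attention(tokens)
```

In this toy example, token 0 gives equal weight to itself and to token 2, even though token 2 sits at the other end of the sequence. That is the "regardless of position" property described above.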

Transformers excel at handling sequential data and are known for their scalability, which has allowed models based on this architecture to process massive datasets and generate human-like outputs. However, transformers are expensive to run: the cost of self-attention grows quadratically with sequence length, so both training and inference demand significant hardware.

What Are Diffusion Models?

Diffusion models are a relatively new class of generative models gaining traction in AI research and applications. During training, a forward diffusion process gradually adds random noise to real data until only noise remains. The model learns to reverse that process step by step, which allows it to generate high-quality data, such as images, starting from pure noise.
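
The noising and denoising idea can be sketched in a few lines of plain Python. This is a minimal illustration that assumes a DDPM-style formulation with a linear noise schedule, which the article does not otherwise specify. The function names, the schedule values, and the toy data are our own, and the "noise prediction" here is simply the true noise standing in for what a trained network would estimate.

```python
import math
import random

def alpha_bar_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Cumulative product of (1 - beta_t) for a linear beta schedule (assumed values)."""
    alpha_bars, running = [], 1.0
    for t in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * t / (num_steps - 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars

def add_noise(x0, alpha_bar, rng):
    """Forward process: blend clean data with Gaussian noise at a given noise level."""
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    xt = [math.sqrt(alpha_bar) * x + math.sqrt(1.0 - alpha_bar) * e
          for x, e in zip(x0, eps)]
    return xt, eps

def estimate_x0(xt, eps_pred, alpha_bar):
    """Invert the forward step, given a prediction of the noise that was added."""
    return [(x - math.sqrt(1.0 - alpha_bar) * e) / math.sqrt(alpha_bar)
            for x, e in zip(xt, eps_pred)]

rng = random.Random(0)
alpha_bars = alpha_bar_schedule(1000)
x0 = [0.5, -1.0, 2.0]                       # stand-in for pixel values
xt, eps = add_noise(x0, alpha_bars[499], rng)
# A trained model would predict eps from xt; here we pass the true noise as an oracle.
recovered = estimate_x0(xt, eps, alpha_bars[499])
```

With a perfect noise prediction the clean data is recovered exactly, up to floating-point error. In practice the prediction is imperfect, which is one reason generation proceeds over many small denoising steps rather than a single jump.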

Diffusion models have shown great promise in tasks like image generation, super-resolution, and denoising. Their ability to create photorealistic images from text prompts, for example, has led to significant interest in fields like computer vision and creative AI. While diffusion models have a different operational approach than transformers, they offer unique advantages in specific use cases.

Transformers vs Diffusion Models: Key Differences

1. Use Cases and Applications

- Transformers: These models excel in tasks that involve sequential data. They are widely used in NLP, speech recognition, translation, and even code generation. Transformers are also powerful for time-series forecasting and general machine learning tasks that require capturing long-range dependencies in data.

- Diffusion Models: Diffusion models, on the other hand, are primarily used in generative tasks, particularly in image and audio generation. Their ability to synthesize realistic images and videos from noise has made them popular in creative AI applications, such as digital art generation, video editing, and virtual content creation.

Startups focusing on text-based applications are more likely to choose transformers, while those involved in creative AI and computer vision are turning to diffusion models for their generative capabilities.

2. Computational Efficiency

- Transformers: Despite their effectiveness, transformers are computationally expensive. Their large parameter counts and the attention mechanism require powerful hardware and large datasets to train. As a result, startups with limited computational resources may find transformers challenging to implement at scale.

- Diffusion Models: Diffusion models are computationally intensive as well, though they can be more efficient than transformers in certain generative tasks and may reach high-quality images and other outputs with fewer training iterations. Generation is iterative, however, since each output is produced over many denoising steps, and they still require substantial resources for fine-tuning and generating high-resolution content.
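
A rough back-of-the-envelope sketch shows where these costs come from. It only counts two quantities we can state with confidence: full self-attention computes one score per pair of tokens, and a diffusion sampler calls its network once per denoising step. The layer count, head count, and step count below are illustrative assumptions, not measurements of any particular model.

```python
def attention_score_entries(seq_len, num_heads, num_layers):
    """Attention scores computed in one forward pass with full self-attention."""
    return seq_len * seq_len * num_heads * num_layers

def diffusion_network_calls(num_outputs, sampling_steps):
    """Network evaluations needed to generate outputs with an iterative sampler."""
    return num_outputs * sampling_steps

# Hypothetical model shape: 12 layers with 12 heads each.
short_context = attention_score_entries(1024, num_heads=12, num_layers=12)
long_context = attention_score_entries(2048, num_heads=12, num_layers=12)
growth = long_context / short_context   # doubling the context quadruples the scores

# Hypothetical sampler: 50 denoising steps per image.
calls_for_batch = diffusion_network_calls(num_outputs=8, sampling_steps=50)
```

The takeaway for budgeting is that transformer cost is sensitive to context length, while diffusion cost at generation time is sensitive to the number of sampling steps.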

Why Startups Are Choosing Transformers

- Proven Success in NLP: Transformers have demonstrated their effectiveness in NLP tasks and have become the backbone of popular AI models like GPT-4 and BERT. Startups that are developing chatbots, customer service automation tools, or sentiment analysis systems are more likely to adopt transformers.

- Scalability: Transformers have shown immense scalability, allowing them to process and generate large amounts of text-based data quickly. This makes them ideal for startups aiming to handle large-scale data processing tasks like content generation, social media monitoring, and data-driven marketing.

- Pretrained Models: The availability of pretrained transformer models (such as GPT-3, T5, and BERT) makes it easier for startups to implement powerful AI systems without the need for training from scratch. This drastically reduces the time and cost involved in deploying AI models.

- Flexibility: Startups that need to process multiple types of data (text, images, video) can use transformers in combination with other models, leveraging their versatility. The ability to fine-tune pretrained transformers for specific tasks also adds to their appeal.

Why Startups Are Choosing Diffusion Models

- Generative Capabilities: Startups working in creative industries are particularly drawn to diffusion models. Their ability to generate realistic images, videos, and other media from scratch or from minimal input (such as a text prompt) makes them a powerful tool for applications like digital art generation, product design, and virtual worlds.

- Photorealism: Diffusion models can create photorealistic images, making them particularly useful for startups involved in marketing, advertising, and media creation. The models can generate high-quality imagery from conceptual descriptions, allowing startups to create unique visual content quickly.

- Advancements in Computer Vision: Diffusion models are being adopted by startups working in computer vision for tasks like image denoising, super-resolution, and video generation. Their ability to generate fine-grained details from noise makes them ideal for visual applications that require high fidelity.

- Emerging Technologies: Diffusion models are still in their early stages compared to transformers, but their potential for audio generation, style transfer, and 3D modeling is drawing attention. Startups exploring new AI-driven technologies are likely to invest in these models as they continue to evolve.

Which Model Are Startups Choosing in 2025?

While transformers remain the dominant architecture for text-based AI applications in 2025, diffusion models are rapidly gaining popularity in the creative industries. Startups in fields like graphic design, advertising, film production, and game development are increasingly relying on diffusion models for their generative capabilities.

Transformers are still the preferred choice for startups focused on data analysis, NLP, and customer engagement. Their ability to process large amounts of text and other sequential data means they will likely continue to be used in a wide range of AI applications for the foreseeable future.

Conclusion

Both transformers and diffusion models offer distinct advantages, and startups in 2025 must carefully choose between them based on their specific needs. Transformers excel in text generation, machine translation, and NLP tasks, making them ideal for startups focusing on data-driven applications. On the other hand, diffusion models are transforming creative AI applications by generating highly realistic images, videos, and audio content.

Startups should assess their resources, goals, and industry demands to determine the best AI architecture for their needs. As the AI landscape continues to evolve, it’s likely that we will see more hybrid models combining both transformer and diffusion technologies to deliver even more powerful and versatile AI solutions.